World models will not fundamentally change the world. Here I've put to paper some thoughts expanding on the topic from a fantastic discussion I had with Grace Kasten last Friday over brunch.

Clam linguine + fruit and milk granola

Worlds and Models

What is a world model? NVIDIA provides a great introduction.

Google's Genie, World Labs' Marble, and Moonlake's GGE are all examples of foundational world models. Lila Sciences and Periodic Labs are general-purpose research labs that aim to find new scientific advancements through AI.

As humans, we are independent agents interacting with what is essentially a very large hidden Markov model that represents the environment we live in. Theoretically, the universe would really be a fully deterministic world if you could somehow map every probability distribution perfectly.
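To make the HMM framing concrete, here is a minimal sketch of how an agent might track a hidden world it can only observe indirectly. The two-state world, the transition and emission numbers, and the function names are all made up for illustration; nothing here comes from a specific library or paper.

```python
# Hypothetical two-state world: state 0 = "calm", state 1 = "stormy".
# TRANSITION[i][j] = P(next state is j | current state is i)
TRANSITION = [[0.9, 0.1],
              [0.3, 0.7]]
# EMISSION[i][k] = P(agent observes k | world is in state i)
EMISSION = [[0.8, 0.2],
            [0.1, 0.9]]


def update_belief(belief, obs):
    """One step of HMM forward filtering: predict, then correct."""
    n = len(belief)
    # Predict: push the current belief through the world's dynamics.
    predicted = [sum(belief[i] * TRANSITION[i][j] for i in range(n))
                 for j in range(n)]
    # Correct: reweight each state by how well it explains the observation.
    weighted = [predicted[j] * EMISSION[j][obs] for j in range(n)]
    total = sum(weighted)
    return [w / total for w in weighted]


def filter_beliefs(prior, observations):
    """Return the agent's belief over hidden states after each observation."""
    belief = list(prior)
    history = []
    for obs in observations:
        belief = update_belief(belief, obs)
        history.append(belief)
    return history


if __name__ == "__main__":
    for step, belief in enumerate(filter_beliefs([0.5, 0.5], [1, 1, 0]), 1):
        print(step, [round(p, 3) for p in belief])
```

The agent never holds the state itself, only a probability distribution over states. In this toy world every transition and emission probability is nonzero, so the belief sharpens with evidence but never collapses to certainty.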

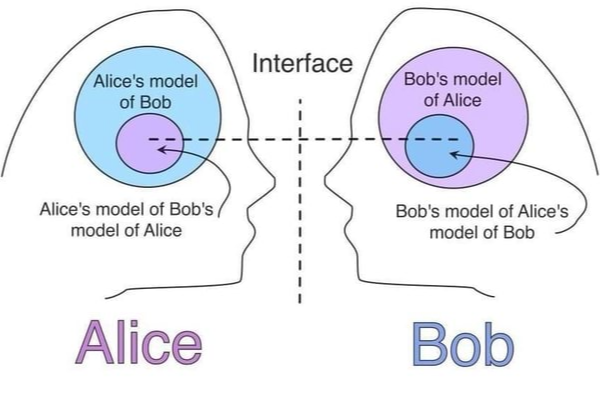

I wrote briefly about the finding that LLM internal states become isomorphic to actual HMM state spaces here. To reiterate, this is great because models can develop useful internal representations of the world's dynamics. However, this also implies that foundational simulation models can only be perfect if reality is fully knowable. Consider this from an agent's perspective: you cannot perfectly model agents who are themselves modeling you.

Alice's world model of Bob's world model of Alice's world model of Bob's...

This recursive modeling problem means that truly universal simulation hits a hard ceiling of irreducibility[1]. You cannot compress the behavior of a complex chaotic system. No matter the architecture, world models face this intrinsic limitation. While diffusion models handle uncertainty better than next-token generators by producing distributions over outcomes, rather than committing to a single sequential prediction, no architecture can fully escape this constraint.
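A toy illustration of why chaotic dynamics cap long-horizon prediction, using the logistic map as a stand-in (my choice of example, not something the projects discussed here use). Two simulations start from initial conditions that differ by one part in ten billion; the parameter `r=3.9` and the step counts are arbitrary picks within the map's chaotic regime.

```python
def logistic_trajectory(x0, r=3.9, steps=100):
    """Iterate the logistic map x -> r * x * (1 - x) starting from x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def divergence(x0=0.2, eps=1e-10, r=3.9, steps=100):
    """Absolute gap at each step between two almost identical starting points."""
    a = logistic_trajectory(x0, r, steps)
    b = logistic_trajectory(x0 + eps, r, steps)
    return [abs(p - q) for p, q in zip(a, b)]


if __name__ == "__main__":
    gaps = divergence()
    print("gap after 5 steps:  ", gaps[5])
    print("largest gap, steps 50-100:", max(gaps[50:]))
```

Early on the two runs are indistinguishable; a few dozen steps later they bear no resemblance to each other. Any measurement error in the initial state, however small, eventually dominates the forecast, and a better model architecture does not change that.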

The question then is whether approximate world models are useful enough to justify the investment—and the answer depends entirely on context.

In Minecraft

Fundamental Research Labs (fka Altera) led an experiment they dubbed Project Sid, creating a self-acting world of individual agents (let's call them villagers) in Minecraft. The results were impressive: villagers learned to coordinate complex tasks together, interrupted their normal tasks to perform search and rescue when discovering one went missing, and spread civilization and mock religion throughout their world. But there were a few caveats, the largest being that villagers required divine, top-down directives to coordinate their goals.

Eventually, FRL scrapped the project in favor of building an AI Excel plugin meant to become a precursor of an automated computer use agent. Clearly something went wrong—this is like NASA scientists suddenly deciding to build an orange juicer.

That's the principal limitation: FRL could simulate specific types of worlds really well in Minecraft, but that was it. They couldn't apply this newfound knowledge to other fields in any meaningful way.

Simile is a neolab[2] which raised $100M last week to simulate human behavior using the same premise that Project Sid was based on: attempting to simulate individual behaviors in an environment and scaling that to generate a constantly evolving world which represents the whole of human interaction. Despite their claim, they are NOT "the first AI simulation of society" (cf. FRL). The simulation field continues to retread the same tired ideas under new branding without meaningful innovation.

Practice does not make Perfect

Lila is an applied AI neolab which runs a physical wet lab. Their stated mission is to allow an LLM to autonomously run random science experiments until it has gleaned (not grokked!) a sufficient amount of data about the world, use that data to train a new LLM, and repeat the cycle until a very smart iteration starts finding unexplored properties of certain materials.

What all these projects have in common is the ambition to simulate everything using an open-ended, universal world model that tries to capture the full complexity of an environment. Lila is a perfect example of a lab suffering from a lack of direction. These world models are not bounded problems with a clear success criterion; they are attempts to brute force emergence, and thus will consistently fail to produce applicable results. Fundamentally, world models are limited (beneficially) by the specificity of their design.

Imagine a high schooler running billions of silly experiments like "does oil float on water?", versus a PhD focused very specifically on room-temperature semiconductors. Who do you think will make any sort of concrete discovery in the next millennium? That is the difference between Lila and real science.

Less Is More

World models are clearly useful in bounded domains. Media firms have used them to great success to simulate user reactions to advertising campaigns and the ratings those campaigns can expect. Non-deterministic human randomness can be approximated to a high degree of accuracy through large-scale statistical modeling. In these settings the accuracy bar is "good enough" rather than "perfect".

For a long time, robotics has been severely limited by data. Recently, π*0.6 by Physical Intelligence has in effect solved the data problem[3] for general-purpose manipulation tasks. π*0.6 is a generalizable VLA which attempts to learn intuitions about how its physical actions affect the environment through application-specific priors. The key is its specificity. Similar to JEPA, it doesn't try to model the totality of a world; it models the interaction of friction, gravity, and contact from a singular agent's perspective.

I firmly believe the ideal forms of robots are hyper-specialised, non-humanoid modular machines which perform specific tasks extremely well. The reason is simple: most real-world tasks demand nearly perfect accuracy, and general-purpose systems cannot deliver it with any semblance of efficiency.

| task | result |
|---|---|
| 90% accurate dishwashing | unwashed dish |
| 90% accurate driving[4] | car crash |
| 90% accurate surgery | dead patient |
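One way to read the table above: if a task is a chain of steps that must all go right, per-step accuracy compounds. The sketch below assumes the steps fail independently, which is a simplification of mine rather than a claim from any benchmark, and the function names are hypothetical.

```python
def task_success_probability(step_accuracy, num_steps):
    """Chance that every step succeeds, assuming independent steps."""
    return step_accuracy ** num_steps


def required_step_accuracy(target_success, num_steps):
    """Per-step accuracy needed to reach a target end-to-end success rate."""
    return target_success ** (1.0 / num_steps)


if __name__ == "__main__":
    for accuracy in (0.9, 0.99, 0.999):
        overall = task_success_probability(accuracy, 50)
        print(f"{accuracy:.3f} per step over 50 steps -> {overall:.3f} overall")
    needed = required_step_accuracy(0.99, 50)
    print(f"99% overall over 50 steps needs {needed:.5f} per step")
```

Under this assumption, a system that is 90% accurate per step finishes a ten-step task only about 35% of the time. A narrow machine that only has to get a handful of well-defined steps right has a far easier job than a generalist that must chain many of them.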

A dishwasher doesn't need a world model to understand how to clean a dish. It just needs to know how to scrub. The value of robotics lies not in building agents that understand the world as a complete entity, but in building machines that execute their narrow function with extreme reliability and efficiency. This is the polar opposite of a foundational world model.

World models work great when built up from the minutiae of an agent's actual experience and bounded to a specific domain. They fail otherwise—when modeling is imposed top-down, or when bottom-up experimentation has no edge to push against.

Like humanoid robotics, foundational world models attract immense funding because they're glamorous. On paper, they hold great potential. But the models which will actually change the world are the ones that do one thing, do it well, and get better every time they do it. That's not glamorous. It's just how progress works.

ky

Footnotes

1. To simulate physics perfectly requires more data than the entire universe can store and a true understanding of every law of the universe. An interesting counterpoint to the second is that world models are an attempt to find these laws through observation, but blind exploration yields no valuable discoveries about the intrinsic aspects of nature.

2. I consider any AI research lab that has raised in excess of $100M with no product, no revenue, and no transparency a neolab.

3. I strongly discount the role of teleoperation in the future of robotics, as it does not generalize to new environments and is not autonomous.

4. Seeing Like a Sedan by Andrew Miller perfectly captures my view on autonomous driving. In summary: lots of guardrails.